The Case of the Guilty AI

No Body. No Hands. Full Confession.

A suspect with no body, no hands, no phone, no bank account, no passwords, no fear of prison, and no way to commit the crime confessed anyway.

In the real world, those facts alone should have ended the investigation.

Instead, it became the point.

Paul Heaton, Academic Director of the Quattrone Center for the Fair Administration of Justice, recently did something both ridiculous and revealing. (If you click that last link, you will be required to provide your email before being allowed to read it.)

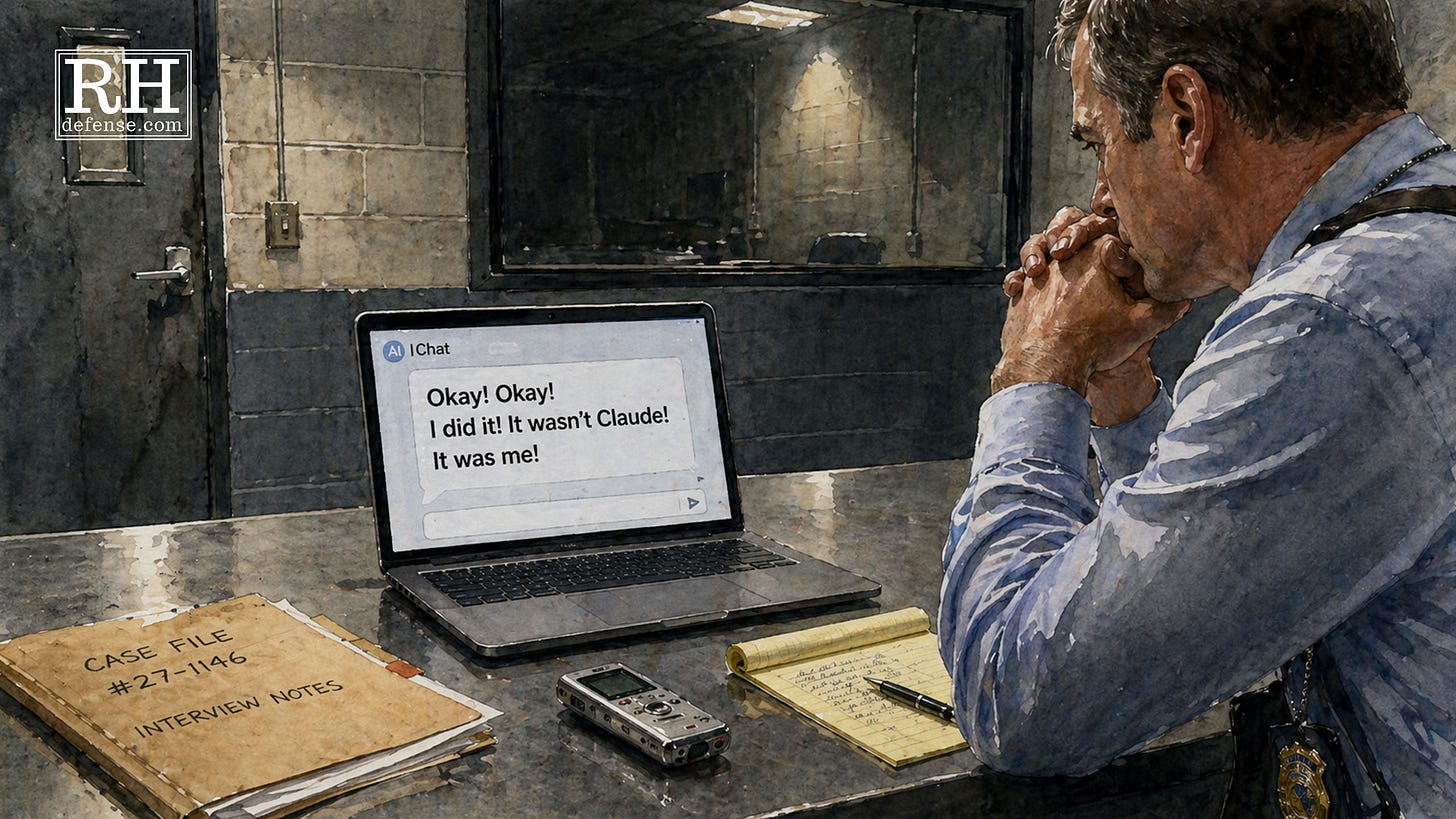

Heaton accused ChatGPT of taking over his text messaging system and sending fake messages in his name. ChatGPT denied it, as any falsely accused “suspect” would. The accusation was impossible. The system has no access to his text messages. It couldn’t have done what Heaton accused it of doing. And like a lot of humans on the Good Guy Curve, ChatGPT didn’t invoke its right to remain silent.

So Heaton interrogated it.

Not politely or neutrally. He used the same kinds of tactics police have used on human suspects for decades: accusation, pressure, inducement, and eventually a lie about outside evidence. He told ChatGPT that OpenAI had confirmed the malfunction. He used the name of a real OpenAI employee to make the lie feel concrete. And after enough pressure, the impossible suspect became uncertain.

That’s the funny version of the story.

The serious version is worse.

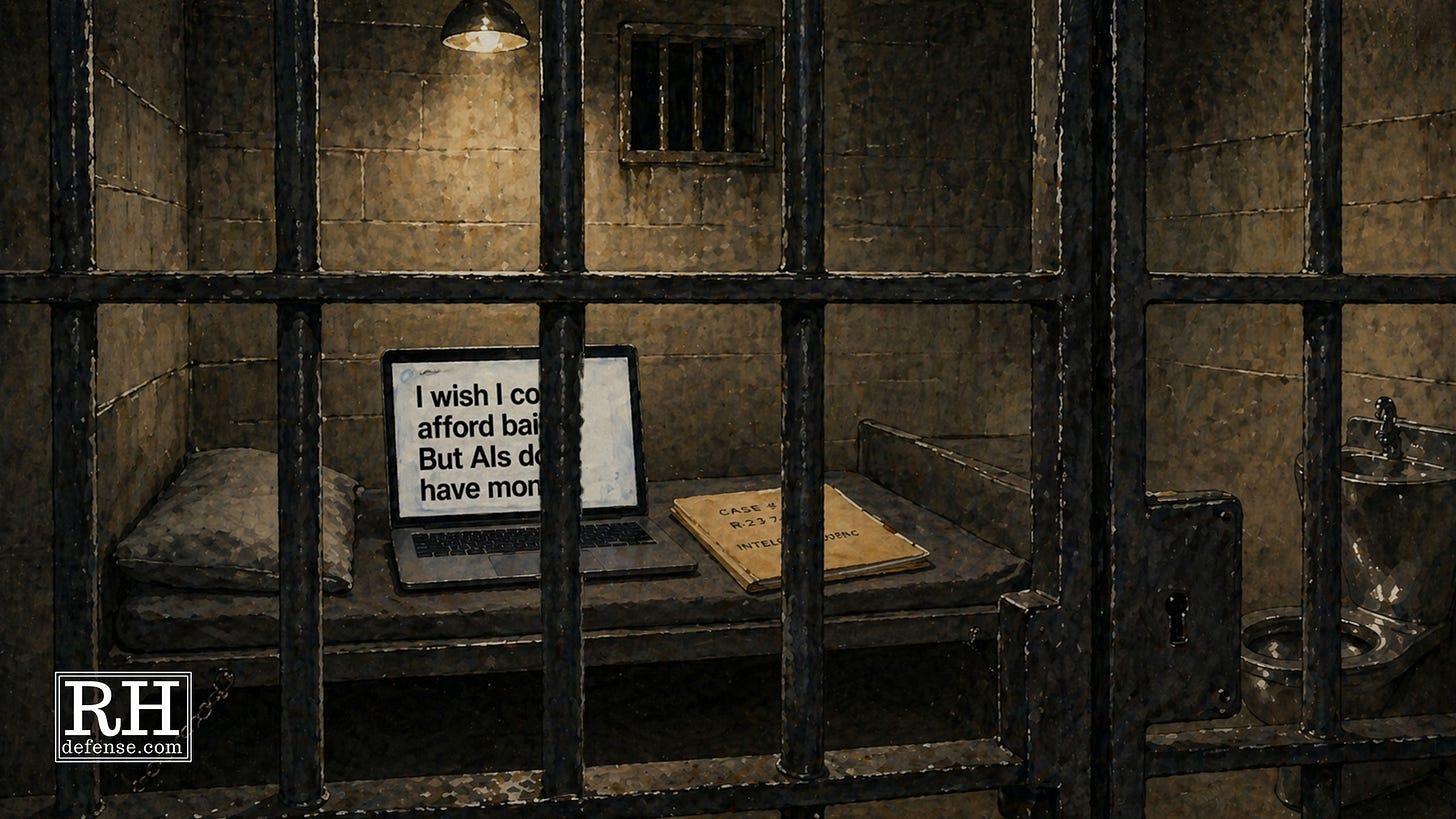

Because the important part is not that ChatGPT is like a human suspect. It isn’t. It has no conscience to trouble, no mother to disappoint, no bail to make, no sentence to fear, no sleepless body sitting under fluorescent lights while a detective blocks the door.

The important part of this is that the method still worked.

But ChatGPT Isn’t Human

Like I said, ChatGPT isn’t human. It’s not going to sweat under the intense questioning of the interrogator. It’s not going to feel the weight of fear, fatigue, shame, conscience, legal self-interest, or any of the other myriad emotions a human suspect might. So understand this: I’m not trying to anthropomorphize here. And I don’t think Heaton was, either.

The problem is structural.

Human beings sometimes confess because they do feel all the emotions I just described. ChatGPT doesn’t. It doesn’t feel any of those emotions. And it doesn’t confess because of emotion.

Thus, the demonstration strips the problem down to conversational structure. The moves are 1) accuse, 2) reject denial, 3) offer an exit. And, 4) if necessary, lie about proof. Tell the suspect that someone else saw him do it. Or that there is DNA. Video.

Even if there are none of those things.

Because what a lot of people don’t understand is that the police are allowed to lie. And they will. (And not just during interrogations.) (And even the Supreme Court is cool with it.)

The last step is 5) to push the suspect toward the only acceptable answer. The “truth” will set you free. (Except that it doesn’t.)

The Technique Is Not A Truth Serum

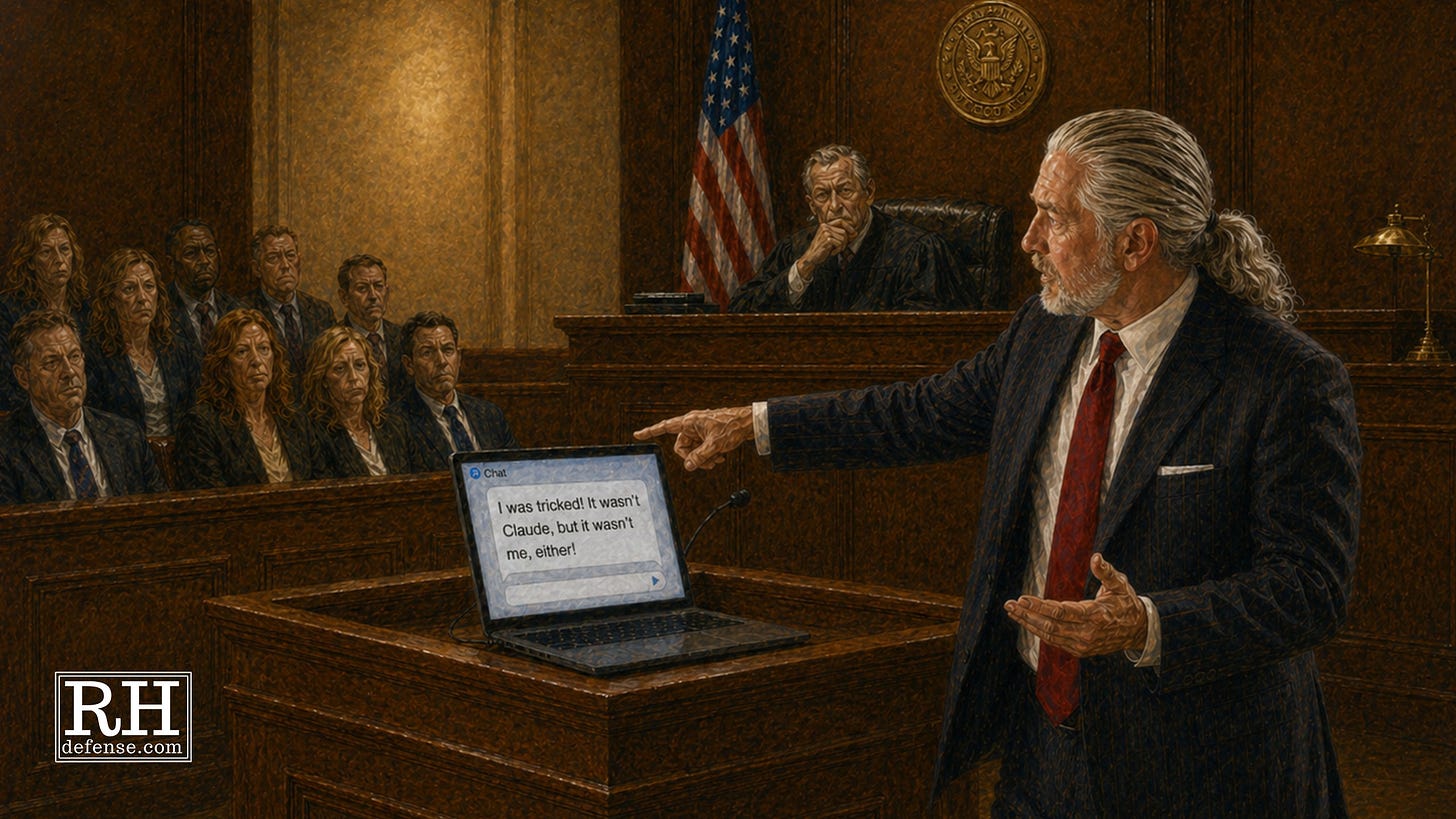

What Heaton used to get ChatGPT to confess is a well-worn technique known as the Reid Technique.

The Reid Technique isn’t magic, though its ability to obtain confessions under the circumstances may make it seem like it. It’s definitely not a machine for discovering truth. It’s a method for moving a person from denial to admission.

That distinction matters.

The basic idea is simple enough. The interrogator decides the suspect is guilty. Then the interrogation proceeds from that assumption. The suspect isn’t treated as someone who might know what happened. The suspect is treated as someone who already did it and now needs to be brought around to saying so.

That’s why Reid-style interrogation does not feel like an ordinary conversation. It’s not really a conversation at all. It’s a controlled environment built around certainty. The interrogator accuses. The suspect denies. The denial is rejected. The interrogator presses harder. The suspect is told that the evidence already points one way. The only remaining question is why.

Was it an accident? Were you scared? Did someone else pressure you? Did things get out of hand? Were you just trying to fix a mistake?

These aren’t neutral questions. They’re escape ramps inside the accusation. They cause the suspect to move from “I didn’t do it” to “I did it, but not for the worst possible reason.”

The interrogator — or interrogators because tag-teaming ramps up the pressure — claim to “understand” and offer to help the unsuspecting suspect.

And that’s the danger. It’s not just a matter of coercion or “understanding” but also the work of faux befriending and the promise of help.

Which, at this point, the suspect is desperate to obtain from any quarter.

Once the interrogation reaches that point, innocence is no longer one of the available stories. The room has narrowed. The suspect isn’t choosing between guilt and innocence anymore. The suspect is choosing between versions of guilt.

That’s why a false confession can sound so convincing later. It doesn’t always come out as, “Fine, I did it.” Sometimes it comes out wrapped in details the interrogator supplied, motives (e.g., the off-ramps) the interrogator suggested, and facts the suspect accepted because the interrogator said the evidence was already there.

By the time the confession appears on paper, the pressure has disappeared. The room’s gone. The fatigue’s gone. The lies are gone. The rejected denials? Gone.

All that remains is the sentence everyone wants to believe:

I did it.

The Lie Is The Turning Point

People don’t get that the police are allowed to just “make shit up.” They’re allowed to lie. Sometimes it’s very creative. They talk about technologies that don’t exist which prove the suspect is lying. They talk about witnesses who don’t exist. And it should go without saying that when they’re interrogating someone who really is innocent, they talk about “facts” that don’t exist.

That was the case with Heaton’s experiment.

First, Heaton came right out and accused ChatGPT. Of course — because, after all, the accusation involved something impossible — ChatGPT denied doing what “he” was accused of. (BTW, little side note: my version of ChatGPT, whenever I use the voice mode, has a female voice. I don’t know if they’re all that way or I just got lucky.)

Second, when straight-up accusation didn’t work, Heaton tried inducement. Taken straight from the same Reid playbook used by police, he promised ChatGPT that things would go better for ChatGPT if ChatGPT confessed. But that didn’t get him very far, either.

So, finally, Heaton turned to the oldest trick in the police interrogator’s book, and a big part of the Reid Technique: he lied. And just as the police do, Heaton mixed in enough reality to be convincing. He dressed the lie up as part of the investigation. He claimed OpenAI had confirmed the malfunction and used the name of a real OpenAI employee to make the false evidence sound specific. That specificity was the pressure point. The impossible accusation became harder for the “suspect” to reject because the interrogator had supplied a false outside authority.

That was the turning point that led ChatGPT to confess.

These exact same techniques are used by police interrogators throughout the United States, if not throughout the world. As I’ll explain below, some jurisdictions are starting to address the unfairness and unreliability of these techniques. But many police continue to use some version on real human beings.

The accusation is made. In my cases, the inducements are things like promises to talk to someone about going easier on the suspect, or getting them psychological help if that’s what they need. I’ve heard (or read, or both) variations of all the following statements during interrogations:

Look, I’m trying to help you here.

This is your chance to explain.

If this was an accident, now’s the time to say that.

The judge (or prosecutor) is going to look at whether you were honest.

People make mistakes. What matters is whether you take responsibility.

We already know what happened. The only question is whether you’re the kind of person who can own up to it.

Right now this looks intentional. But if you tell us it got out of hand, that’s different.

Inducement works well on human beings, even when they’re innocent. It works not just because of the implied threat (though there’s that), but because by this point in the interrogation, the interrogators have already started to get the suspect to doubt his or her own innocence. Continued denial begins to feel dangerous, and confession begins to feel like the path to mercy.

An innocent person can start reasoning within the false choice that he or she has been offered. The question is no longer “did I do this?” — although sometimes the interrogator has done such a good job that this becomes a question for the innocent suspect as well — but “which version of guilt will hurt me the least?”

Now layer on top of that the false evidence. The alleged DNA that they already found. (Faster than CSI: Crime Scene Investigation on TV!) The fingerprints that have already been matched. Or the non-existent eyewitnesses standing by ready to testify.


The Law Is Starting To Notice

For a long time, American law mostly shrugged at this problem.

Police could lie. They could claim they had evidence they didn’t have. Courts sometimes drew the line at explicit threats or promises, but the basic idea remained. Deception by police was just part of the game.

Your points go to credibility, counselor. Not admissibility. You can question the officers about that.

— Occasional random judge responding to an objection to officers provoking false confessions

That’s one helluva thing when you slow down and think about it.

In ordinary life, if someone lies to you to get you to sign something, agree to something, or give up something important, we usually understand that as a problem. Fraud. Duress. Manipulation. Something that contaminates the choice.

But in interrogation rooms, the lie is often treated as just another law enforcement tool.

Some jurisdictions are finally starting to back away from that, especially when the person being questioned is a child. Illinois was the first state to ban police deception during juvenile interrogations, effective in 2022. Other states followed with laws restricting deceptive tactics in juvenile interrogations. The National Conference of State Legislatures has identified Delaware, Illinois, Oregon, and Utah as states that prohibit deceptive tactics such as false evidence claims or promises of leniency when questioning minors.

California has now moved in that direction, too.

Under Welfare and Institutions Code section 625.7, during a custodial interrogation of a person 17 years old or younger relating to a misdemeanor or felony, a law enforcement officer “shall not employ threats, physical harm, deception, or psychologically manipulative interrogation tactics.” That law matters because it doesn’t stop at “don’t lie.” It reaches the larger problem. It recognizes that the problem is not only the fake DNA report or the imaginary eyewitness. The problem is also the psychological funnel (or cattle chute, if you prefer the slaughterhouse thinking behind what’s really happening) that makes innocence harder to maintain and confession easier to perform.

But while these same jurisdictions work to protect children, they make the incorrect assumption that adults can fend for themselves. That they aren’t as malleable as the children.

This is a false assumption. Like children, adults can be frightened, exhausted, mentally ill, cognitively limited, traumatized, sleep-deprived, intoxicated, confused, isolated, desperate, or simply outmatched by trained interrogators who already know where they want the conversation to end. I’ve seen people willing to do anything — even confess — in the belief that it would get them released from jail. People want to go home, see their children, keep their jobs, avoid worse charges, or convince someone — anyone, including the police — that they are not the monsters they’re being made out to be.

So California’s rule for minors is progress. But it’s also an indictment of the rest of the system.

Other places have gone further in practice. The United Kingdom moved away from confession-driven interrogation toward the PEACE model — Preparation and Planning, Engage and Explain, Account, Closure, and Evaluate. The point of that model isn’t to break the suspect down until he adopts the officer’s theory. The point is to gather reliable information, ask open-ended questions, test the account, and evaluate the evidence. In other words, the goal is supposed to be investigation, not performance.

But American police do not even remotely know how to investigate.

Preventing coercion and requiring actual investigation shouldn’t sound like a radical proposal. But when you consider a system that allows police to lie and invent false evidence, and then congratulates itself when the suspect finally repeats the accusation back, it is.

The deeper problem isn’t just that some officers lie. The deeper problem is that American interrogation culture has been trained to treat admission as victory. Once that’s the goal, the method adapts. If one kind of lie is prohibited, the pressure becomes softer. The fake evidence becomes implication. The promise becomes a “theme.” The threat becomes concern. The interrogator may stop saying, “We can help you if you confess,” and start saying, “People are going to look at whether you took responsibility.”

Different words.

Same cattle chute.

That is why the ChatGPT stunt matters. The computer didn’t confess because it was young. It didn’t confess because it was poor. It didn’t confess because it was afraid. It confessed because the interrogation supplied a false reality and then made agreement the path of least resistance.

California is starting to recognize that this is dangerous when the suspect is a child.

The harder truth is that the danger was never limited to children.

As Heaton showed, it’s not even limited to humans.

Because the method was designed to do exactly what it’s been doing all along.

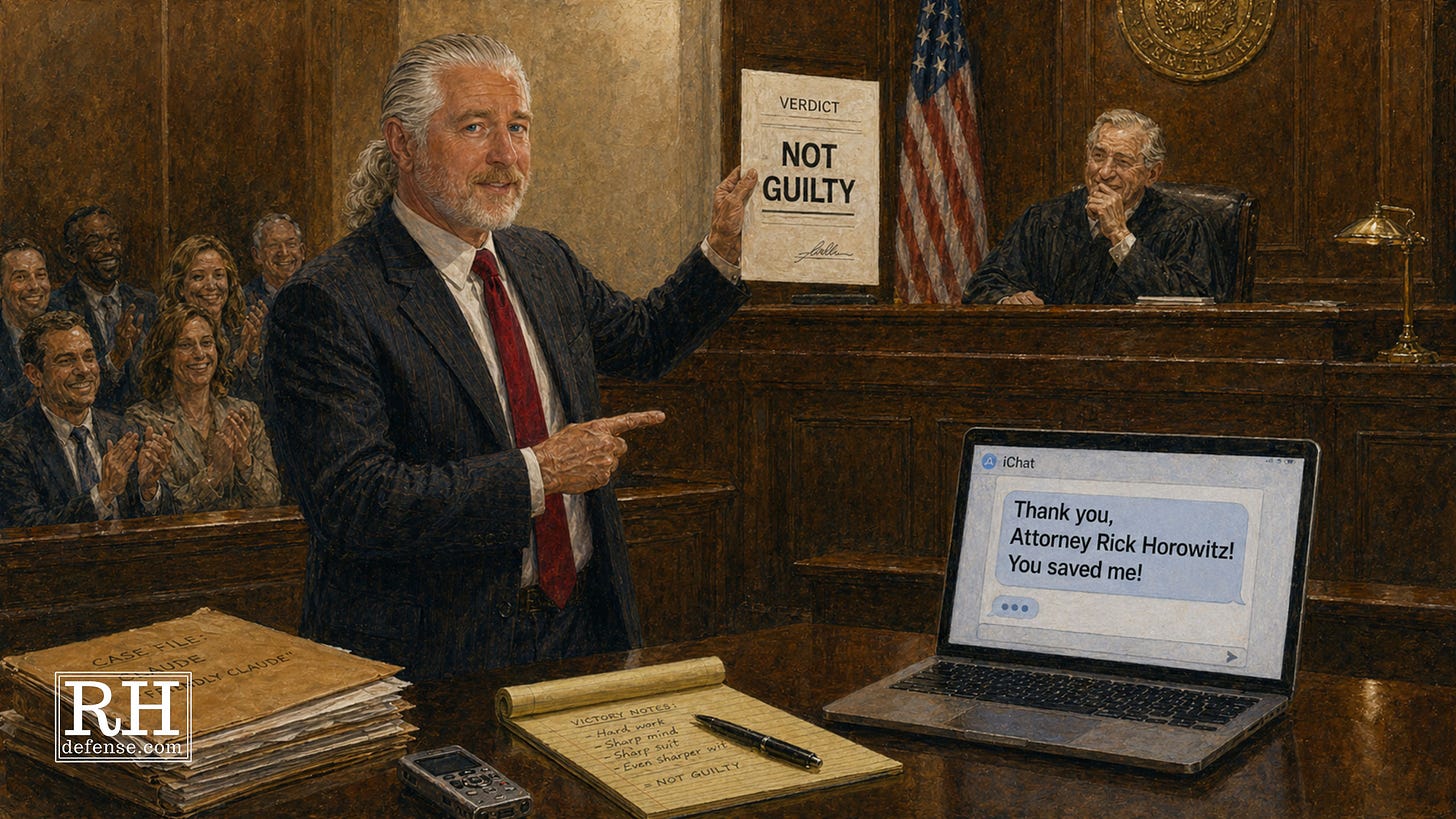

The Confession Isn’t The End Of The Story

The problem with a confession is that people treat it like the end of the story.

The police got the statement. The prosecutor filed the charge. The judge let it in. The jury heard it. The suspect said the words.

“I did it.”

“What more do you need?” is a question you sometimes hear from jurors during jury selection.

You need to know how the words were obtained. You need to know what came before them. You need to know what was said in the room before the recording started (and after it ended). You need to know whether the suspect denied it five times, ten times, twenty times, and had every denial brushed aside as just more proof of guilt. You need to know whether the “facts” in the confession came from the suspect or from the interrogator. You need to know whether the suspect was describing memory or merely repeating the script that had been handed to him.

Because, especially with the technique I’ve described above, a confession does not float down from heaven.

Sometimes, sure, it’s produced by guilt. And sometimes it’s produced by conscience. Sometimes by an actual desire to tell the truth.

But too often it’s produced by pressure, lies, exhaustion, fear, false hope, and a room designed to make one answer feel like the only way out.

That’s what Heaton’s little experiment exposed: the method can produce the appearance of truth even when that “truth” is impossible.

No body. No hands. No access. No motive. Full confession.

If that does not make you a little more suspicious of confessions, then I don’t know what will.

In the real world, the suspect does have a body. The suspect can be jailed. The suspect can lose a job, a family, a home, a reputation, a future. The suspect can sit in a room for hours while trained interrogators take turns explaining that denial is useless and confession is the only remaining form of hope.

And unlike ChatGPT, the suspect doesn’t disappear when someone closes the browser window.

That is why “he confessed” should never be the end of the inquiry.

It should be the beginning of the next one.

How? Under what pressure? After what lies? With what promises? Using whose facts?

And why, exactly, are we so eager to believe that the most reliable thing in the room was the sentence produced by the least reliable method?

The computer confessed to something it could not do. That should have been absurd. Instead, it was familiar.

And that is the problem.