Automation Cosplay

How the Courts Borrow the Aesthetic of AI to Paper Over Human Bias

Every morning in Madera County begins with a ritual of institutional theater.

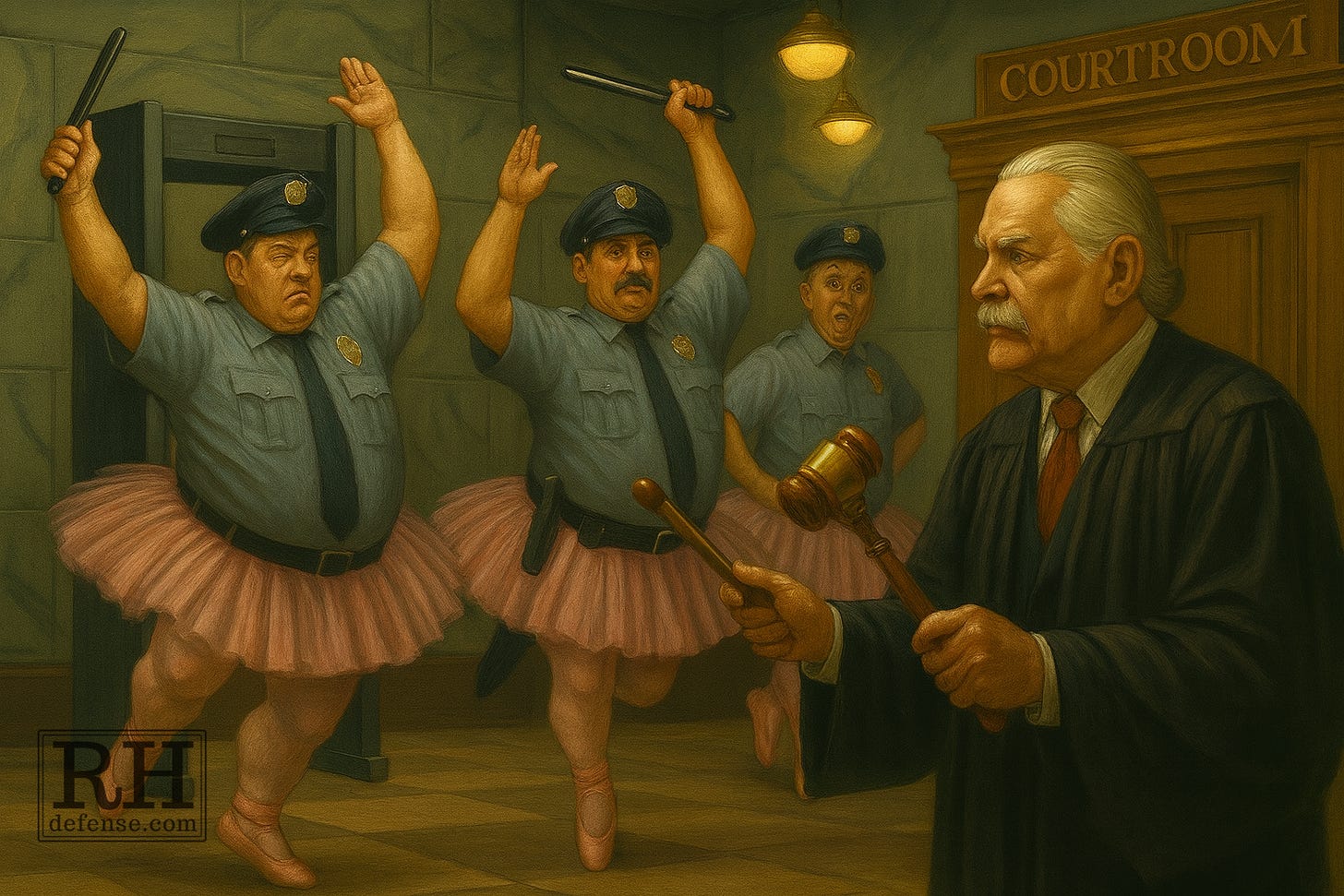

The guards — not deputies, just rent-a-cops — bark their commands like a cross between TSA understudies and junkyard dogs in an off-Broadway courthouse production. Watch off. Belt off. Laptops powered up. iPads, too. They peer in bags and poke through papers as if the performance itself keeps the place safe.

I call it “Security Theater”, and the show never ends.

You can smuggle in a gun by accident. (Yes, I know lawyers with concealed carry permits who “forgot” and managed to get their guns through. Or, at least, they’ve told me that.)

But God help you if your watch beeps.

And in Madera, they wand you even after you pass the metal detector, just to make sure everyone knows the ritual matters more than the reality.

That’s how most of our justice system works. Not just at the courthouse door, but on the bench itself. The modern courtroom has turned performance into proof — in this case not of safety, but of fairness.

And now, with the arrival of AI “risk assessments” and “data-driven” sentencing, the play has a new act.

I call this “automation cosplay”.

The courts don’t use AI to make better judgments; they use it to make judgments look better — to pretend that bias has been replaced by math, or something even more objective (I know; I know; that’s my point). Judges order risk-assessment reports and ignore them when they disagree, but the act of ordering one is enough to sanctify the result. The ritual has been observed. The robes stay clean.

The algorithms don’t actually decide anything. But they give the illusion that someone objective has. That’s not technology; it’s theater — the aesthetic of AI draped over the same old prejudice.

Some time ago, in a different context, I quoted United States v. Cronic. It’s chronically used when writing briefs relating to the ineffective assistance of counsel.

[I]f the process loses its character as a confrontation between adversaries, the constitutional guarantee [to the effective assistance of counsel] is violated. As Judge Wyzanski has written: “While a criminal trial is not a game in which the participants are expected to enter the ring with a near match in skills, neither is it a sacrifice of unarmed prisoners to gladiators.”

— United States v. Cronic, 466 U.S. 648, 656, 104 S.Ct. 2039, 80 L.Ed.2d 657 (1984).

Just like what happens when you try to enter the Madera courthouse.

The difference between Madera’s security line and what happens in the courtroom is only a matter of costume and vocabulary. Downstairs, they act out safety. Upstairs, they act out reason.

The actors change — judges instead of guards, probation officers instead of screeners — but the script is the same: follow the procedure, invoke the tool, claim neutrality.

And these days, we’re transforming everything into a game where skills are irrelevant, in order to sacrifice unarmed prisoners to gladiators and our dignity to a farce.

When a judge orders a risk-assessment report, everyone in the room understands it’s part of the ritual. The report will come back stamped with guesses and categories that sound scientific. “Low,” “moderate,” or “high” risk — like the language of weather forecasts pretending to predict human behavior.

Then the judge reads it, nods, and does whatever they planned to do anyway.

If the report recommends release, it’s “just an algorithm.” If it recommends detention, it becomes “objective evidence.” The magic is in the timing: embrace confirmation bias, and believe in the machine only when it agrees with you.

That’s what I mean by automation cosplay. The court borrows the aesthetic of AI — the data, the metrics, the procedural language — to dress up old instincts in new clothes.

And everyone goes along with it, because pretending we’ve outsourced judgment feels cleaner than admitting how much of it is still personal, emotional, and arbitrary.

When I say “everyone”, I mean you. Me. We.

Yes, we. We created the cauldron of legal fiction that results in the ever-changing, never-changing story of innocent men going to prison, with some eventually being exonerated. We are responsible for a criminal law system gone awry. We with our certainty that everything has simple answers.

— Rick Horowitz, One Big Cauldron of Legal Fiction (March 20, 2016)

We’ve done all this together. Because justice is hard and handing our prejudices and biases over to AI makes it just a little simpler.

The real danger of automation cosplay isn’t that the system uses tools it doesn’t understand. It’s that we start believing it does understand them. It’s dangerous because cosplay is just that — putting on costumes and playing at justice. But the emperor has no new clothes.

Judges learn to speak the language of objectivity fluently — “validated instruments,” “evidence-based practices,” “quantitative assessments” — but fluency isn’t comprehension. It’s performance. The words sound technical enough to feel true.

In psychology, they call that the illusion of explanatory depth: we think we understand something because we can repeat its vocabulary. The courtroom just gives it better lighting.

Risk-assessment algorithms tell us a defendant is a “moderate risk” for failure to appear, as if “moderate” were a measurable trait like temperature or blood pressure. Lawyers argue over decimals that never existed in the first place. Probation officers treat predictions as facts. And judges, who once trusted their gut, now trust their gut dressed up as data.

The algorithm doesn’t remove bias. It codifies it — then translates it into a dialect of math that sounds like reason.

That’s what makes this act more dangerous than the first. Security theater at least keeps its absurdity in plain view. Automation cosplay hides it behind a dashboard.

At least in the lobby, everyone knows it’s a show. No one actually believes the rent-a-cops are saving lives with their wand choreography. But upstairs, the performance gets reverent. Everyone bows to the script.

When the robe and the report agree, it doesn’t mean reason has prevailed; it only means the mystery has been rebranded. We once called it faith. Now we call it “data.”

The second reason for not relying on the Oracle, I once wrote,

is that no one knows how AI — particularly LLMs — work. Even those who ‘program’ them. The ancient Oracle entered trances induced by mysterious fumes from the depths of the cavern below the Temple. Our modern AI Oracle driven by programmatic fumes to function acts in mysterious ways.

— Rick Horowitz, From Fumes to Function: How One Lawyer (Me!) Uses Artificial Intelligence (August 31, 2024)

The courts have begun to inhale those same programmatic fumes. We built these systems, we trained them, but we don’t understand what they’re doing. And still we breathe deeply, mistaking opacity for wisdom, complexity for comprehension.

The courtroom still runs on faith, only now the robes themselves wear the vestments of technology. The algorithm becomes the Oracle, the judge the interpreter, and the defense lawyer the heretic for asking if any of it makes sense.

I’ve spent most of my career interrupting that ritual. Not because I hate order, but because I know how easily ritual turns into justification. When the process becomes the proof, truth doesn’t stand a chance.

As my friend, Scott Greenfield — who thinks “Em dashes are for people who are too lazy to use proper punctuation” — once reminded us wrenches-in-the-works:

But if you step foot in the well on behalf of a criminal defendant and have any hesitation in doing whatever is necessary to zealously represent your client[’]s interests within the bounds of the law. There is no pretty pink bow, no facile excuse, no ABA Model Rule, that will justify you putting your social justice feelings ahead of your duty to your client.

You are not a criminal defense lawyer. You are a disgrace.

No matter what your personal values, and how passionately you feel about them, and how many people rub your tummy because they, like you, feel deeply, when you walk into that courtroom as a criminal defense lawyer, your only duty is to defend your client.

— Scott Greenfield, The Criminal Defense Lawyer’s Duty, Social Justice Version (April 7, 2017)

So I still stand in that Madera security line every morning, watching the metal detectors hum and the guards act out their parts, and I remind myself: this isn’t justice. It’s theater.

And if no one else remembers that, I’ll play the only role left: I’ll be the guy who refuses to clap at the end of the show.

Keep refusing to clap, Rick!!

You said it. Keep saying it. Cause it’s so true. Just thinking of the presumption of guilt for bail ritual that was in place for over a hundred years until the California Supreme Court said they’d had enough of that last year in Harris.